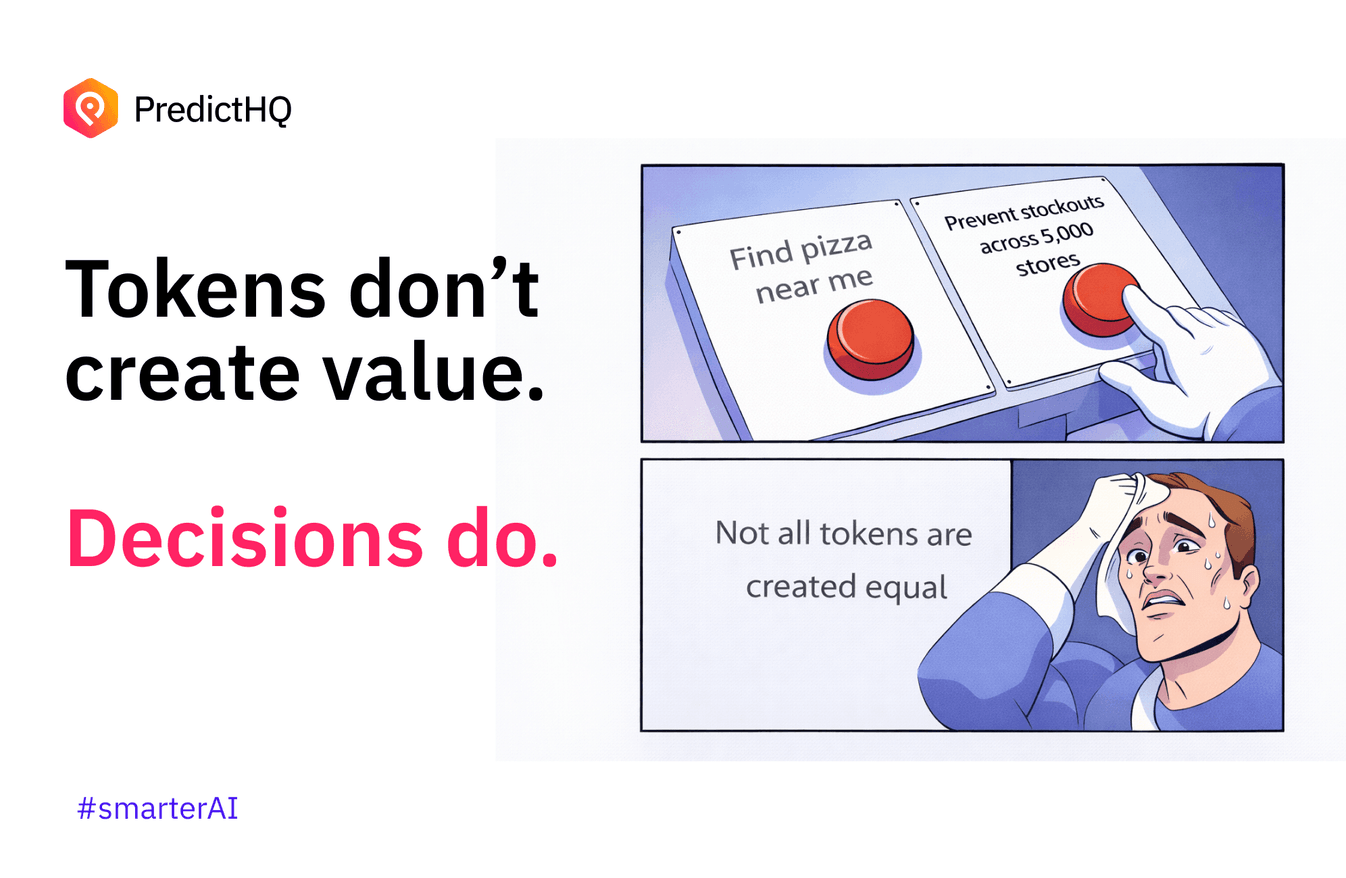

Not all Tokens are Created Equal

Most AI is priced on tokens. But tokens don’t create value; decisions do.

Cost per token, cost per request, cost per million tokens: this kind of pricing is simple, scalable, and easy to benchmark. But it completely ignores where the real value actually sits.

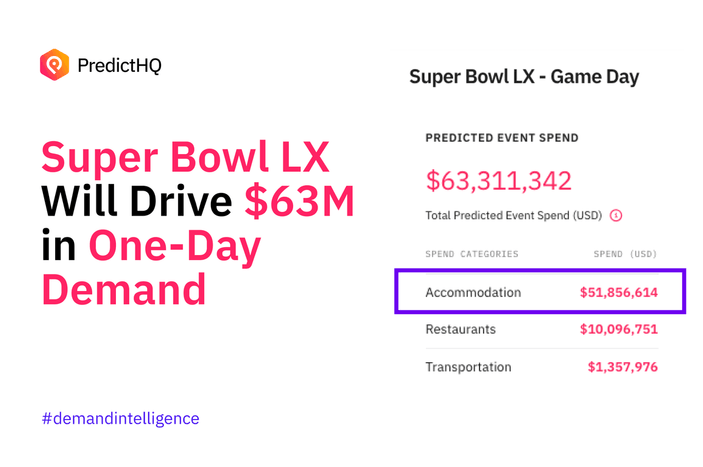

Right now, a token that answers “I’m in San Francisco tonight, where should I go for pizza?” is effectively treated the same as a token that influences pricing, staffing, or inventory decisions across 5,000 stores. Those two things are not comparable. One is convenience. The other drives hundreds of millions in measurable business outcomes.

As Dario Amodei pointed out, some outputs are worth cents, while others can be worth millions. The difference isn’t the model. It’s what the output actually changes.

The industry is optimizing for the wrong thing

We’ve built an AI economy that optimizes for output, not outcome. Most AI systems today are built and priced around efficiency:

- Speed of response

- Volume of tokens

- Cost reduction

That works well for horizontal use cases like summarization, support, and general reasoning. But it breaks down as soon as AI is used to drive real decisions inside a business.

Enterprises don’t care about tokens. They care about outcomes.

A low-cost output that doesn’t change anything is just low value. More importantly, a low-cost output that leads to the wrong decision is extremely expensive. On the flip side, a trusted and explainable output that improves forecast accuracy, aligns staffing to real demand, or prevents lost revenue is worth orders of magnitude more, regardless of how many tokens it used.

The gap: tokens vs decisions

The core issue is that the industry is still valuing generation, not impact.

The greatest value of AI isn’t in producing text. It’s in changing what happens next.

That means the unit of value shouldn’t be tokens. It should be decisions. Specifically:

- Did the output improve the decision?

- Did it reduce uncertainty?

- Did it drive measurable ROI?

If the answer is no, the token has very little value. If the answer is yes, the token is dramatically underpriced.
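To make the arithmetic concrete, here is a minimal sketch of the gap between what a token costs and what it is worth. Every number in it is an illustrative placeholder, not measured data: the flat price, the token counts, and the dollar values attached to each decision are all assumptions, as are the names `Output`, `token_cost_usd`, and `value_per_token_usd`.

```python
from dataclasses import dataclass

# Hypothetical flat price; real pricing varies by provider and model.
PRICE_PER_MILLION_TOKENS_USD = 10.0


@dataclass
class Output:
    name: str
    tokens: int
    decision_value_usd: float  # illustrative estimate of what the output changed


def token_cost_usd(output: Output) -> float:
    """What the output costs under per-token pricing."""
    return output.tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS_USD


def value_per_token_usd(output: Output) -> float:
    """Estimated decision value spread over the tokens that produced it."""
    return output.decision_value_usd / output.tokens


# Two outputs of identical length with very different (assumed) impact.
pizza = Output("pizza recommendation", tokens=500, decision_value_usd=0.05)
staffing = Output("staffing plan across 5,000 stores", tokens=500,
                  decision_value_usd=1_000_000.0)

for out in (pizza, staffing):
    print(f"{out.name}: cost ${token_cost_usd(out):.4f}, "
          f"value per token ${value_per_token_usd(out):,.4f}")
```

Under per-token pricing both outputs cost exactly the same, because cost depends only on length. The value per token differs by many orders of magnitude, because it depends on the decision the output feeds.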

Why most models fall short

Models are trained on the past. Businesses operate in the present. Even as models improve, they are still fundamentally limited by what they know.

They are trained on historical data and patterns, but they don’t have native awareness at scale of what is happening in the real world right now and how that connects to business impact. That creates a structural blind spot.

They don’t know that:

- A cluster of events is happening 0.3 miles from your highest-performing store

- A school holiday is about to shift demand across a region as parents change travel and purchasing patterns

- A hurricane is about to disrupt supply chains and push demand into areas outside the impact zone

So they generate outputs that look right based on the past, but miss what is actually driving demand in the present for a specific business, where demand is as unique as a fingerprint.

Where this starts to change

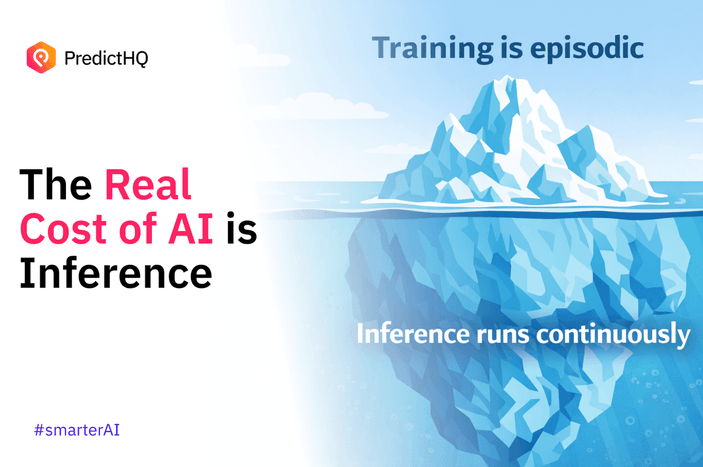

In our experience, the first step-change comes from training the model to understand real-world context. That has a significant impact, but it is episodic, because training happens only periodically. The second step-change comes from improving the accuracy, trust and explainability of the model’s outputs at inference or decision time.

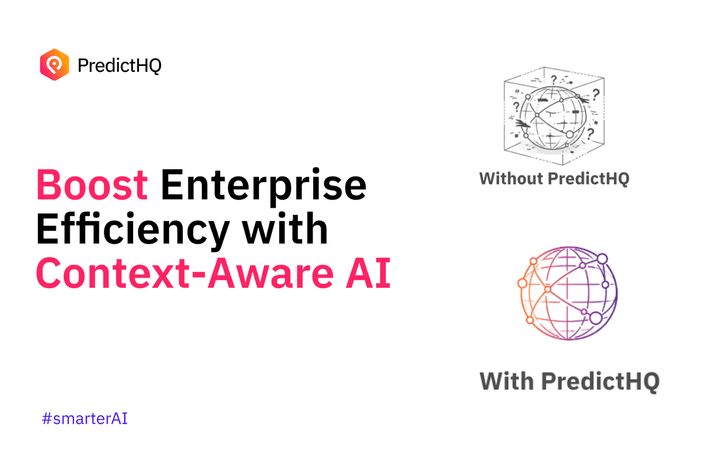

When you introduce verified context about what’s actually happening in the world, models stop relying purely on historical patterns and start adapting to current conditions. That empowers their users to make decisions grounded in reality.

That means they can:

- Understand the external drivers behind demand

- Adjust predictions at inference time

- Produce outputs that are grounded in what’s happening right now

At PredictHQ, this is the platform we’ve built: verified, relevant, real-world context that plugs directly into forecasting models, AI systems, and operational workflows, improving what those systems base their outputs on.

So instead of guessing from history, systems can reason with context and explain their outputs to users with specificity.

And that’s the difference between an answer that sounds right and a decision that actually is right.
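As a rough illustration of adjusting a prediction at inference time, the sketch below applies real-world context signals to a history-based baseline forecast and records which signals moved the number, so the result can be explained. It is not PredictHQ’s API or method: `ContextSignal`, `adjust_forecast`, the multiplicative combination of signals, and all the numbers are hypothetical choices made for this example.

```python
from dataclasses import dataclass


@dataclass
class ContextSignal:
    description: str
    demand_multiplier: float  # hypothetical effect size; 1.0 means no effect


def adjust_forecast(baseline_units: float, signals: list[ContextSignal]):
    """Scale a history-based baseline by each signal and list the drivers.

    Assumes signals combine multiplicatively, which is a simplification.
    """
    adjusted = baseline_units
    drivers = []
    for signal in signals:
        adjusted *= signal.demand_multiplier
        if signal.demand_multiplier != 1.0:
            drivers.append(signal.description)
    return adjusted, drivers


# Illustrative inputs only.
signals = [
    ContextSignal("cluster of events 0.3 miles from the store", 1.5),
    ContextSignal("school holiday shifts regional demand", 0.5),
]
forecast, drivers = adjust_forecast(100.0, signals)
print(f"adjusted forecast: {forecast} units, driven by: {drivers}")
```

Returning the list of drivers alongside the number is the point of the design: the system can say why the forecast moved, not only that it did.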

Where this is heading

Foundation models will continue to improve and commoditize baseline capabilities. Generating answers will get cheaper and faster. However, that only makes this problem more obvious.

As generation becomes abundant, the value shifts to:

- Proprietary data

- Real-world context

- Decision impact

The companies that win won’t be the ones generating the most tokens. They’ll be the ones whose outputs consistently lead to better decisions.

The bottom line

A token that helps you find a pizza place is useful.

A token that helps you make the right commercial decision across 5,000 stores is valuable.

The industry is still pricing both as if they are the same. That won’t hold.

Because ultimately, tokens don’t have value. Decisions do.