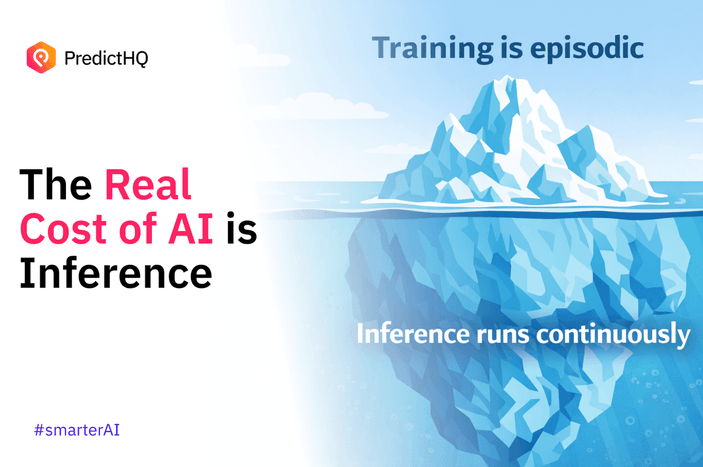

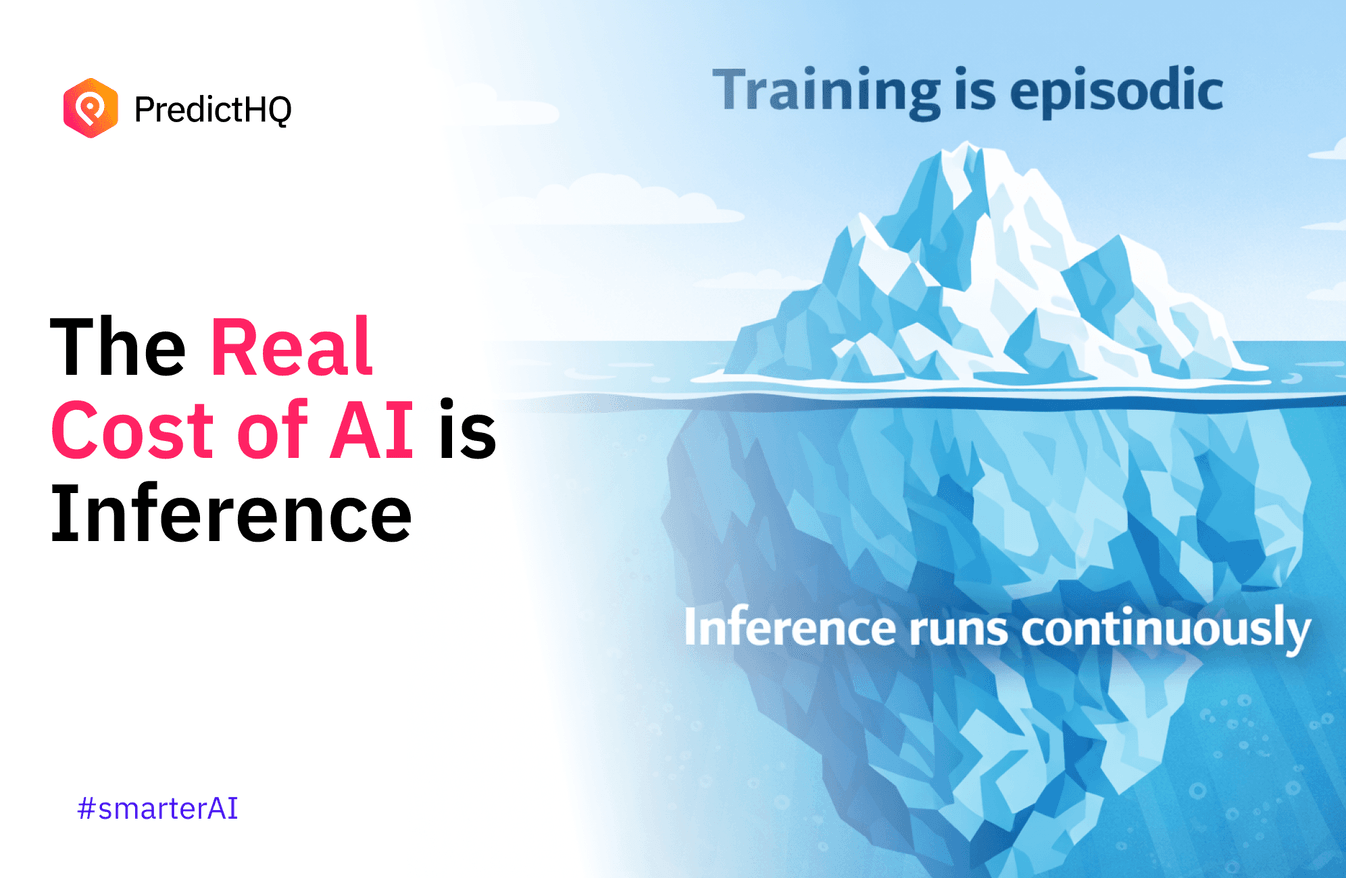

AI's Real Cost Isn't Training. It's Inference.

For years, the AI conversation centered on training. Bigger models. More GPUs. Larger datasets. Massive capex headlines.

But as enterprise AI moves from experimentation into production, the cost profile is shifting.

Training is episodic. Inference runs continuously.

Forecasts refresh daily. Agents execute workflows. Pricing models update in real time. Recommendations adjust per user, per store, per city.

And every one of those calls is inference.

The Economics Are Changing

Multiple signals point in the same direction:

- NVIDIA’s data center revenue growth is increasingly driven by inference workloads, not just training clusters.

- Microsoft and Google have both highlighted rising inference costs as AI products scale in production environments.

- OpenAI has publicly discussed the heavy infrastructure costs required to serve models at scale, where usage, not training, drives recurring expense.

- Software companies are shifting to consumption-based and value-based pricing models to better align revenue with inference-driven costs.

When AI is embedded into operational systems, inference becomes a recurring operating line item. It compounds with adoption.
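To make the "episodic versus continuous" point concrete, here is a minimal sketch of how quickly recurring inference spend can overtake a one-time training bill. The function name and every figure are hypothetical, chosen only for illustration, and the model assumes simple month-over-month growth in usage.

```python
from typing import Optional


def months_until_inference_exceeds_training(
    training_cost: float,
    monthly_inference_cost: float,
    monthly_growth: float,
    max_months: int = 120,
) -> Optional[int]:
    """Return the first month in which cumulative inference spend
    exceeds the one-time training cost, or None if it never does
    within max_months.

    All inputs are illustrative assumptions, not measured data.
    """
    cumulative = 0.0
    monthly = monthly_inference_cost
    for month in range(1, max_months + 1):
        cumulative += monthly
        if cumulative > training_cost:
            return month
        # Usage (and therefore inference spend) grows with adoption.
        monthly *= 1.0 + monthly_growth
    return None


# Hypothetical scenario: $1M one-time training cost, $50k of inference
# in the first month, usage growing 10% per month.
crossover = months_until_inference_exceeds_training(1_000_000, 50_000, 0.10)
print(crossover)  # 12
```

Under these assumed numbers, inference passes the training bill within the first year and keeps growing afterward, which is the sense in which it compounds with adoption.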

The question isn’t whether inference is expensive.

It’s whether the output justifies the cost.

The Hidden Risk in Enterprise AI

If an AI output is ignored, distrusted, or only partially implemented, the organization still pays the inference bill.

At small scale, that’s experimentation.

At enterprise scale, that becomes recurring spend with limited return.
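A rough way to quantify this is to divide the inference bill by the outputs that are actually acted upon, rather than by the total number of calls. The sketch below does that; the function names, the notion of an "action rate," and the sample figures are assumptions made for illustration.

```python
def cost_per_acted_output(
    total_calls: int, cost_per_call: float, action_rate: float
) -> float:
    """Effective cost of each output that someone actually acts on.

    action_rate is the (hypothetical) fraction of outputs that are
    used in a decision, between 0 (exclusive) and 1 (inclusive).
    """
    if not 0.0 < action_rate <= 1.0:
        raise ValueError("action_rate must be in (0, 1]")
    total_cost = total_calls * cost_per_call
    acted_outputs = total_calls * action_rate
    return total_cost / acted_outputs


def wasted_spend(
    total_calls: int, cost_per_call: float, action_rate: float
) -> float:
    """Inference spend on outputs that were ignored or distrusted."""
    if not 0.0 <= action_rate <= 1.0:
        raise ValueError("action_rate must be in [0, 1]")
    return total_calls * cost_per_call * (1.0 - action_rate)


# Hypothetical month: 1M calls at $0.002 each, 25% of outputs acted on.
effective = cost_per_acted_output(1_000_000, 0.002, 0.25)
wasted = wasted_spend(1_000_000, 0.002, 0.25)
print(f"effective cost per acted-on output: ${effective:.4f}")
print(f"spend on unused outputs: ${wasted:,.2f}")
```

In this assumed scenario, each acted-on output costs four times the nominal per-call price, and three quarters of the bill buys nothing.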

Many organizations discover this only after moving from pilot to production. Dev environments feel manageable. Production environments, which serve real users with longer contexts and tighter latency targets, can multiply inference costs far faster than anticipated.

Adoption without optimization turns inference into a growing operating expense line.

This is where trust becomes economic.

Inference delivers its highest ROI when:

- The output is relevant to the real-world environment

- It is explainable to decision-makers

- It is embedded directly into workflows

- It is acted upon

Without those elements, inference becomes cost.

With them, inference becomes leverage.

Why Real-World Context Is Now Infrastructure

Foundation models are extraordinary at language, pattern recognition, and general reasoning.

What they do not inherently have is verified, structured, continuously refreshed real-world context tied to economic demand.

They do not naturally reason about:

- How a concert in Austin shifts rideshare demand by neighborhood and hour

- How overlapping school holidays and weather patterns change grocery volume

- How local economic events propagate into staffing requirements

Large models predict tokens.

Operational systems need grounded spatial, temporal, and economic reasoning.

When models lack verified, structured, continuously refreshed real-world signals, the systems built on them tend to compensate with longer context windows, more retries, and heavier compute. That increases cost without improving outcomes.

Grounded systems focus inference on causally relevant signals, such as events, demand drivers, and spatial and temporal shifts. That improves accuracy and spends compute only where it materially affects outcomes.

Performance-per-dollar at inference becomes the true optimization metric.
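One possible way to express performance-per-dollar is correct outputs per dollar of inference spend. The sketch below compares two hypothetical configurations; the class, its fields, the pricing model (a flat price per 1,000 tokens, multiplied by the average number of attempts), and all numbers are illustrative assumptions rather than benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceConfig:
    """A hypothetical serving setup; every field is an assumed value."""
    name: str
    accuracy: float             # fraction of outputs that are correct (0..1)
    tokens_per_call: int        # prompt + completion tokens per attempt
    avg_attempts: float         # 1.0 means no retries
    price_per_1k_tokens: float  # assumed flat price in dollars

    def cost_per_request(self) -> float:
        # Retries multiply the token bill for a single logical request.
        per_attempt = self.tokens_per_call / 1000 * self.price_per_1k_tokens
        return per_attempt * self.avg_attempts

    def correct_outputs_per_dollar(self) -> float:
        return self.accuracy / self.cost_per_request()


# Illustrative only: an ungrounded setup leaning on long context and
# retries, versus a grounded one passing fewer, more relevant signals.
ungrounded = InferenceConfig("ungrounded", 0.70, 8000, 1.5, 0.01)
grounded = InferenceConfig("grounded", 0.80, 2000, 1.1, 0.01)

for cfg in (ungrounded, grounded):
    print(
        f"{cfg.name}: ${cfg.cost_per_request():.3f} per request, "
        f"{cfg.correct_outputs_per_dollar():.1f} correct outputs per dollar"
    )
```

With these assumed inputs, the grounded configuration wins mostly on the denominator: a modest accuracy gain combined with a much smaller token and retry bill. Real comparisons would need measured accuracy and actual pricing.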

As AI moves from chat interfaces into production decision systems, this gap becomes structural.

The enterprises that close it will see:

- Higher model accuracy

- Greater explainability

- Faster organizational adoption

- Lower wasted inference spend

Inference Is Becoming a Strategic Layer

The next phase of AI isn’t about who trained the biggest model.

It’s about who improves the quality of inference at the point decisions are made.

That’s a different category. It’s less about compute. More about grounding. Less about experimentation. More about production infrastructure.

The companies that help enterprises turn inference from a cost center into a durable operating advantage will sit in a critical position in the AI stack.

As AI adoption accelerates, the compounding effect of better inference quality becomes economically meaningful.

And that is where the real long-term leverage lies.